Stephen L. Carter: Meta and Alphabet's courtroom losses may not last

Published in Business News

Social media biggies have taken it on the chin from juries this week. And although, for reasons I’ll get to, I’m skeptical that the verdicts will hold up on appeal, I do think there are some important lessons here.

The cases themselves are, by now, well known. On Tuesday, a jury in New Mexico awarded $375 million against Meta Platforms Inc. for harm that its products allegedly cause to children and teens. The next day, a California jury returned a $6 million verdict against Meta and Alphabet Inc.’s Google for allegedly creating the plaintiff’s social media addiction, which in turn led to broader mental health challenges.

If nothing else, the outcomes of these trials illustrate the scope of national anger at Big Tech generally, and social media companies in particular. Literally thousands more lawsuits are pending.

But I’m not sure the money will ever get paid. On appeal, the principal battle will be whether Meta and Alphabet fall within the safe harbor protections of Section 230 of the Communications Decency Act of 1996, which immunizes social media companies from most forms of liability for content created by third parties.

In the trials that ended this week, and in dozens of pending cases around the country, plaintiffs have tried to get around Section 230 by arguing, for example, that the companies have made false and deceptive statements to the public, and that they’ve created a product designed to addict their users. The arguments aren’t bad — some are quite clever — but my realist side still suspects that the defendants will win in the end.

Exhibit One: In recent years, the US Supreme Court has been noticeably skeptical of efforts to impose expansive liability on companies that primarily connect users to other users’ content. The justices have been unsympathetic to efforts to tag the social media giants for the activities of their users, no matter what knowledge the companies are alleged to have had, even when YouTube was alleged to have indirectly (and accidentally) given a share of advertising revenue to ISIS. (Please note: alleged.)

If there’s any doubt about where the justices’ sympathies lie, a less-remarked decision this week serves as a reminder. In Cox Communications v. Sony Music Entertainment, decided on Wednesday, the Supreme Court ruled unanimously that an internet service provider is not liable for providing services to known copyright infringers. The statutes involved are, of course, quite different, but however distant the melody, the rhythm is familiar.

The ISPs, too, have their safe harbor, a provision of the Digital Millennium Copyright Act that expressly protects them against certain copyright infringement claims. The justices rejected the argument that the suit should proceed simply because the particular claim brought by the plaintiffs isn’t listed among those safely harbored.

I’d expect the justices to cast the same skeptical eye on the claim that Section 230’s safe harbor doesn’t apply to Meta and Alphabet. To be clear, I am expressing no view on whether the social media companies should be liable. Rather, I am predicting what the Supreme Court will do when the cases get there. Even if we should judge that skepticism to be a mistake, the skepticism still exists.

But maybe I’m wrong. Let’s assume, for the sake of argument, that the effort by the plaintiffs’ lawyers to find a path around Section 230 succeeds. The liability shield vanishes. Does that mean the verdicts should stand?

Maybe. Maybe not. My libertarian side believes in resolving most harms in jury trials rather than, say, through regulatory processes. But science can be tough for juries (and judges!) to comprehend, and the extensive scholarship on how well it’s explained or understood by judges or juries suggests that the answer is “poorly.”

I wasn’t in either courtroom and didn’t follow the trials day by day, so I can’t evaluate the evidence presented on addictiveness or emotional and mental harm. What one can safely say is that these are matters of enormous complexity. We aren’t being unsympathetic toward those facing mental health challenges when we note that the evidence of causation is sharply disputed and, among serious researchers, the key questions remain far from settled. Even the definition of addiction is seriously contested.

None of that is meant to get Meta and Alphabet off the hook. As I said, I wasn’t present for the trials. But, as I will be telling the students in my evidence class next week, there are few, if any, legal scholars (and I’d bet, fewer scientists) who imagine that a jury trial is the path toward uncovering scientific truth.

But even if one thinks a legislative solution is more sensible, how on earth does one get Congress to act? One thoughtful suggestion is that a few big jury verdicts — which in turn signal a judicial narrowing of the safe harbor provisions of Section 230 — would spur Big Tech into a round of fierce lobbying that might in turn lead Congress to adopt new legislation clarifying these tough questions.

In that sense, Meta CEO Mark Zuckerberg was entirely rational this week in accepting an invitation to join President Donald Trump’s Council of Advisors on Science and Technology. Nevertheless, when these lawsuits inevitably reach the Supreme Court, I’d be surprised if the justices chose to wait for either legislative action or the whim of our executive-orderer-in-chief.

Which leads to a final point.

I spend almost no time on social media, but I recognize that my lack of interest leaves me seriously out of step with contemporary society. Still, among regular users, I detect a variety of affective responses. The same user might love staying in touch and hate the habit that’s formed, might love following politics through others’ posts and hate that this is what democracy has become. It’s our national Rorschach; the testing is constant, and everybody seems to wind up mad.

Social media takes it on the chin for lots of things these days: depression among teens, the rise of the conciliatory left, the rise of the vengeful right, the enshrinement of tl;dr as a justification for not applying oneself to the task of comprehending complex issues, the collapse in consumption of serious news — if there is a problem in the world today, chances are somebody has an explanation for how social media is to blame. Maybe the claims are all bunk. Maybe some or even most of the blame is deserved. Either way, I think the courtroom isn’t the forum to resolve them.

Oh — and neither is arguing on social media.

____

This column reflects the personal views of the author and does not necessarily reflect the opinion of the editorial board or Bloomberg LP and its owners.

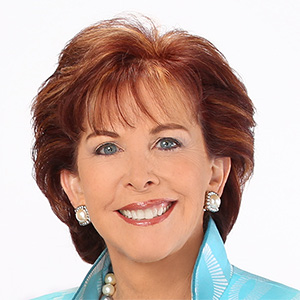

Stephen L. Carter is a Bloomberg Opinion columnist, a professor of law at Yale University and author of “Invisible: The Story of the Black Woman Lawyer Who Took Down America’s Most Powerful Mobster.”

©2026 Bloomberg L.P. Visit bloomberg.com/opinion. Distributed by Tribune Content Agency, LLC.
